There are currently hundreds of extremist videos available on YouTube.

Researchers at the right-leaning Henry Jackson Society have identified 61 far-right extremist videos and 60 Islamist extremist videos that are still live on the site, all of which they say they had flagged to the company.

They include:

- A video entitled “Adolf Hitler was right”, praising Hitler over images of Jewish families being taken to concentration camps (flagged on 1st August, still live on 18th September)

- A video of a man slapping a Muslim teenager with bacon and shouting “Isis scum” (flagged on 12th July, still live on 18th September)

- A video of burning buses, celebrating the deaths of Shia Muslims, who are described as kafir (unbelievers) (flagged on 12th July, still live on 18th September)

- A video of a child singing over images glorifying terrorism (flagged on 21st July, still live on 18th September)

- Promotional material from the Taliban (flagged on 29th August, still live on 18th September)

Yvette Cooper MP, who commissioned the report and is chair of the Home Affairs Select Committee, said:

YouTube have promised to do more, but they just aren’t moving fast enough. Google, which owns YouTube, is one of the richest and most innovative companies on the planet. They have the resources and capability to sort this and they need to do so fast.

Alan Mendoza, executive director of the Henry Jackson Society, said:

Research from our database of Islamist terrorist offences from 1998 to 2015, a total of 269 profiles, has found that in over a third of offences (35 percent) the internet was cited as a major site for the offender’s engagement with extremism and terrorism. These ideologies can be freely disseminated and amplified online and there is room for improvement by technology firms to provide spaces to expose and debate their inconsistencies.

Meanwhile, in separate research published earlier this week, analysts at the think tank Policy Exchange found that Islamic State is producing, at a conservative estimate, about 100 new items of content each week, including execution videos and bomb-making instructions, despite major military defeats in Iraq and Syria.

Policy Exchange also found that almost three-quarters of the British public want large internet companies to do more to find and delete content that could radicalise people.

Later today, UK Prime Minister Theresa May will meet her French counterpart, President Emmanuel Macron, at the annual United Nations (UN) General Assembly to discuss ways to tackle online extremism.

The two leaders have discussed how to tackle online radicalisation before. In June this year, May and Macron discussed creating a new legal liability that could see technology companies such as Google and Facebook fined for failing to remove extremist content from their platforms.

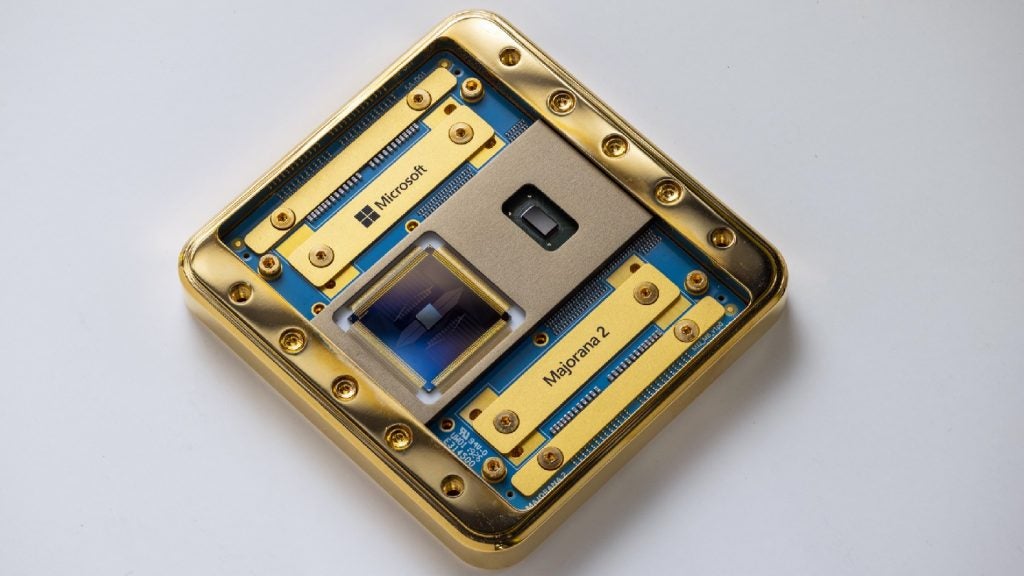

On the sidelines of the UN meeting, May will also meet senior executives from Google, Facebook and Microsoft, alongside her Italian counterpart, Paolo Gentiloni.

What more can be done?

Microblogging site Twitter yesterday released its latest twice-yearly transparency report, which said its internal controls were allowing it to identify and remove accounts used for the “promotion of terrorism” earlier, rather than waiting for government requests to close them down.

Twitter said it had removed 299,649 accounts for the promotion of terrorism in the first half of this year, a 20 percent decline from the previous six months, although it gave no reason for the drop.

Three-quarters of those accounts were suspended before posting their first tweet. Almost all of these were spotted by Twitter’s spam-fighting artificial intelligence tools, meaning they were removed automatically because the device, the location or the user’s internet identity had previously been used for extremist content.

Other social media companies, including Facebook, are investing in automated technology to improve the detection and takedown rates of extremist accounts.