The Defense Advanced Research Projects Agency (DARPA) has announced the development of an AI-controlled robotic limb that can learn over time as part of a wider project targeting the development of true lifelong machine learning.

At present, machine learning systems cannot learn continuously. They are instead taken offline and retrained whenever new capabilities are introduced. DARPA hopes to develop a system that will enable machines to continuously learn in the field, in the same way humans and animals do.

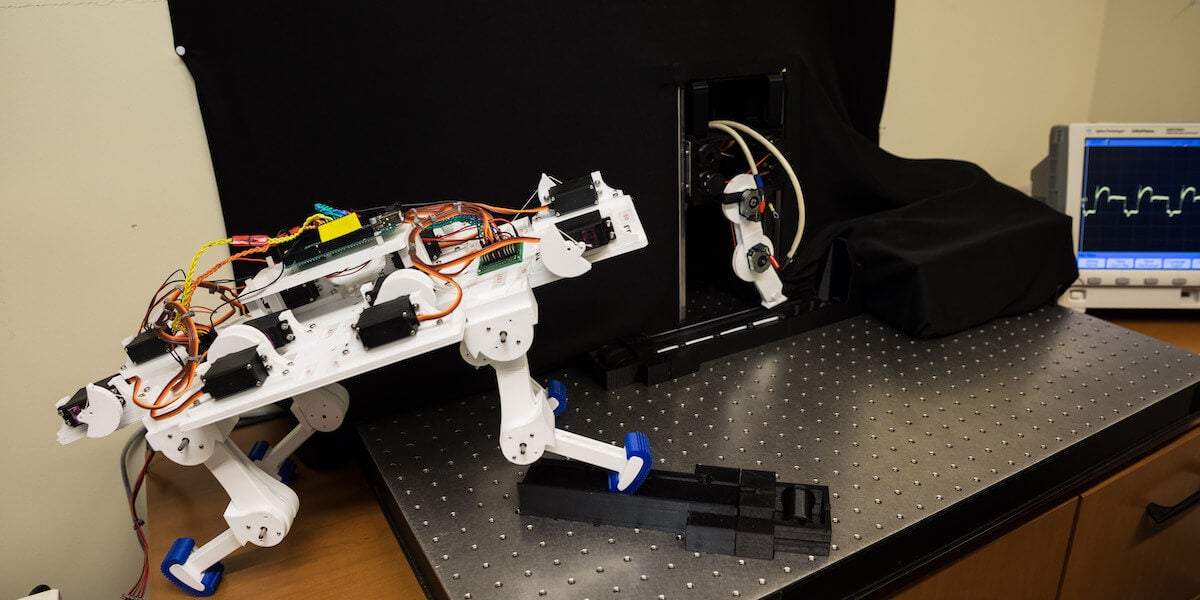

Now more than a year into a research project on the subject, DARPA has announced the development of an AI-controlled robotic limb equipped with tendons designed to mimic those of an animal.

Developed by researchers at the University of Southern California’s Viterbi School of Engineering, the robotic limb uses algorithms based on biological systems to teach itself without needing to be taken offline.

Through its learning process, the robot has successfully taught itself to walk, and can recover from interference with its balance.

DARPA targets lifelong machine learning

DARPA’s plan to develop lifelong machine learning, known as the Lifelong Learning Machines (L2M) programme, was announced in 2017. It is hoped that it will lead to the development of AI that can learn in the field, allowing for robotics and systems that can adapt to evolving situations.

“The L2M program’s prime objective is to develop systems that can learn continuously during execution and become increasingly expert while performing tasks, are subject to safety limits, and capable of applying previous skills and knowledge to new situations, without forgetting previous learning,” explained Dr Hava Siegelmann, program manager at DARPA’s Information Innovation Office.

“Though complex, it is an area where we are making significant progress.”

While the robotic limb project, which is detailed in an article published in Nature Machine Intelligence this week, is an early effort, it demonstrates the value of what is known as “unstructured play” in achieving the goal of lifelong machine learning.

This involves the use of random movements, which allow the robot to learn about its own capabilities and how they relate to the wider environment.
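
As a rough illustration of the idea, and not the researchers' actual method, the short Python sketch below has a simulated one-joint limb try random commands, record where it ends up, and fit a simple model it can then use to reach a target. The `limb_response` function, its linear behaviour, and all other names here are hypothetical assumptions made for the example.

```python
import random

def limb_response(command):
    # Hypothetical limb physics, hidden from the learner (an assumption
    # for this sketch): position depends linearly on the motor command.
    return 2.0 * command + 0.5

def babble(n_trials, rng):
    # "Unstructured play": issue random commands and record the outcomes.
    samples = []
    for _ in range(n_trials):
        command = rng.uniform(-1.0, 1.0)
        samples.append((command, limb_response(command)))
    return samples

def fit_line(samples):
    # Ordinary least squares fit of position = slope * command + intercept.
    n = len(samples)
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    var = sum((x - mean_x) ** 2 for x, _ in samples)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept

def command_for(target, model):
    # Invert the learned model to pick a command for a desired position.
    slope, intercept = model
    return (target - intercept) / slope

rng = random.Random(0)
model = fit_line(babble(50, rng))
command = command_for(1.5, model)
reached = limb_response(command)
```

In this toy setting the limb never sees `limb_response` directly; everything it knows comes from its own random movements. A real tendon-driven limb would be far less predictable and would need a much richer model.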

This contrasts sharply with the currently dominant machine learning models, which involve pre-programming for potential scenarios.

It is hoped that over time the project will be able to build on this research to develop solutions that overhaul the very way that machine learning occurs – and shape AI’s development in the process.

“We’re at a major moment of transition in the field of AI. Current fixed methods underlying today’s smart systems will quickly give way to systems capable of learning in the field,” said Siegelmann.

“The missing ingredients to safer, more flexible, and more useful AI are the abilities to both learn while in operation and to apply learning to new circumstances for which the system was not previously trained.

“These abilities are necessary, for instance, for complex systems like self-driving cars to become truly functional. Incorporating L2M technologies will allow them to become increasingly expert as they drive in different conditions and will make them safer than human-driven cars.”