Meta Platforms has unveiled an array of new technologies aimed at bolstering its artificial intelligence (AI) capabilities.

These include the company’s first custom silicon chip to run AI models, an AI-optimised data centre design and a supercomputer for AI research.

In a blog post Meta said: “These efforts — and additional projects still underway — will enable us to develop larger, more sophisticated AI models and then deploy them efficiently at scale.”

When prompted, AI models generate answers in a process called inference, a workload that needs to be processed in a specific way.

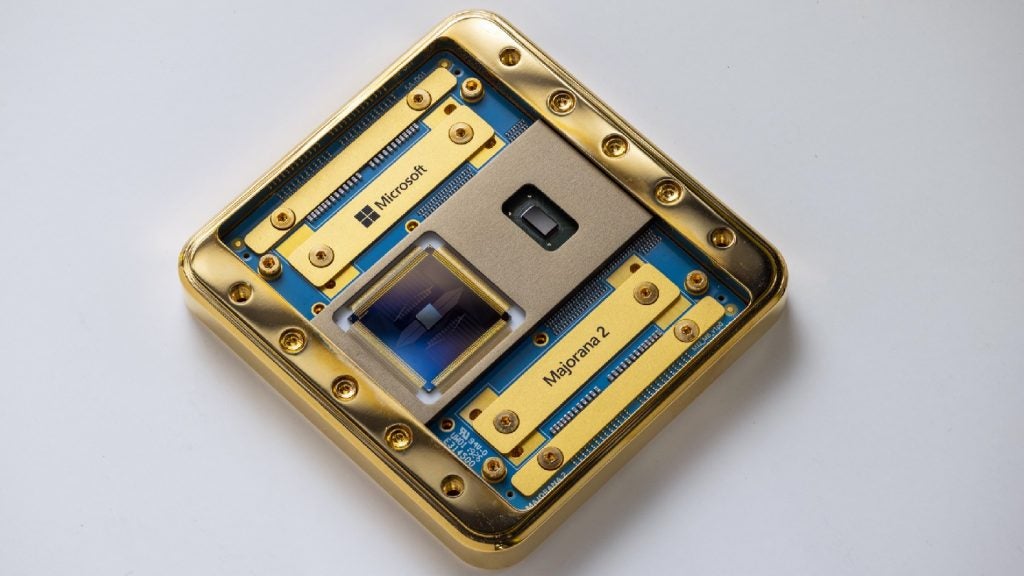

For inference tasks, the company designed a chip family called MTIA (Meta Training and Inference Accelerator).

The chip, which is optimised for Meta’s internal workloads, is said to offer superior computational power and efficiency compared to CPUs.

By utilising both MTIA chips and GPU supercomputers, Meta hopes to enhance performance, reduce latency, and improve overall efficiency for each workload.

The company has also launched the Meta Scalable Video Processor, its first in-house-developed solution designed specifically for video processing workloads.

In an emailed statement to Bloomberg, Meta CEO Mark Zuckerberg said: “This work reflects long-term efforts that will enable even more advances and better use of this technology across everything we do.”

As the AI race intensifies, Meta is trying to keep pace with its Research SuperCluster (RSC), an AI supercomputer.

Powered by 16,000 GPUs, the RSC is designed to train advanced AI models for various applications such as augmented reality, content understanding, and real-time translation.

Over the coming decade, Meta expects chip design to become more specialised, AI infrastructure to be tailored for specific tasks, deployment at scale to be facilitated by new systems and tools, and product support to become more efficient.

These advancements will enable the development of advanced models based on cutting-edge research and provide global accessibility to emerging technologies, the company said.