Italy’s privacy watchdog, the National Authority for Data Protection (NADP), last week became the first regulator to open an investigation into OpenAI’s ChatGPT. Debate has since turned to the inevitability of tighter regulatory oversight to control the effects of the explosion in the use of generative AI, and to the possibility that other Western democracies will follow Italy’s lead as the conversation around AI and ethics becomes more urgent.

The Italian data protection authority banned the popular chatbot over data privacy concerns after learning that it had suffered a cyber security breach on March 20, 2023. For a period of nine hours, the incident exposed the full names and some credit card information of users of the paid version of the service.

The investigation focuses on whether ChatGPT has violated EU law, specifically the General Data Protection Regulation (GDPR), which governs how businesses use, process, and store personal data. The watchdog also argues that ChatGPT has no filters to verify the age of its users, so minors can be exposed to answers that may be unsuitable for their degree of maturity. It has therefore asked OpenAI to respond within 20 days of the ban, detailing the steps it will take to remedy the situation, or risk a fine of up to €20 million or 4% of its annual global turnover.

The debate around responsible AI is more contested than ever

The ban has generated considerable speculation, given the current hype around generative AI, as the business community and the public at large wonder about the repercussions of the technology’s widespread use. Policymakers face an uphill battle in this area.

The EU has responded to the challenge with plans to enact the Artificial Intelligence Act, currently under debate in the European Parliament, which contemplates appointing a national regulator in every EU country to oversee AI policy.

On the other hand, the viability of enforcing such bans in practice has been questioned, since savvy users can simply resort to a VPN to circumvent controls such as the one imposed by Italy.

Bans on the tool are not new, however: ChatGPT has been banned for months in many schools and universities to combat plagiarism. Examples include prestigious universities such as Cambridge and Oxford in the UK, as well as New York City Public Schools, the largest school district in the US. While outright bans on AI technologies are widely seen as too extreme, it is worth highlighting that in this specific case Italy’s authorities are merely following the letter of the law.

Regulating AI is an urgent problem

AI governance is becoming an increasingly complex issue, and the past month has seen intense discussion, including an open letter signed by Twitter owner Elon Musk and Yoshua Bengio, a notable name in deep learning, among other prominent technology leaders.

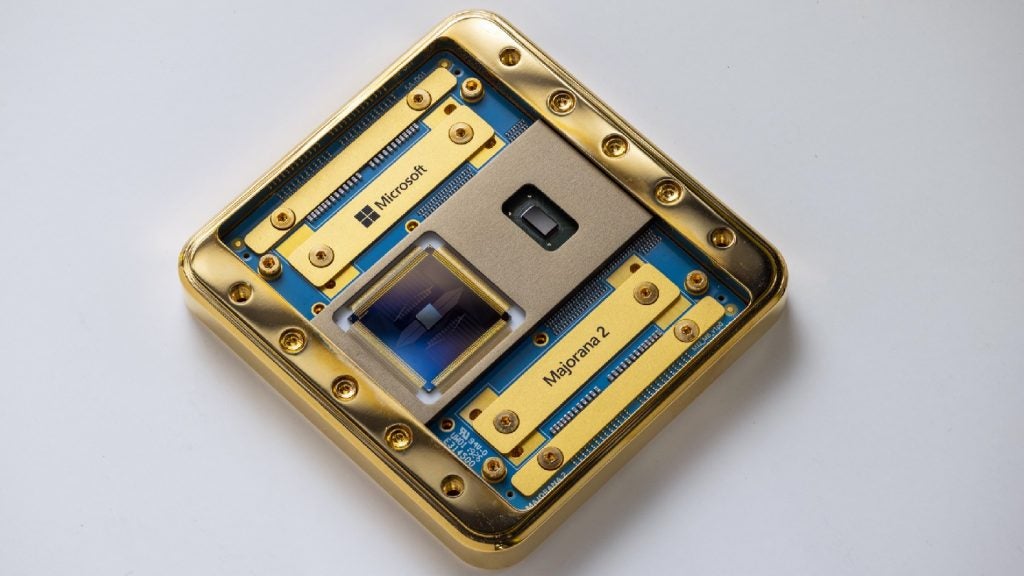

The signatories called on AI labs to immediately pause, for at least six months, the training of AI systems more powerful than GPT-4. OpenAI has not disclosed how many parameters GPT-4 uses; the widely circulated figure of 170 trillion is unconfirmed. GPT-3, by comparison, has 175 billion parameters.

Whilst many defend the content of the letter, others have argued that it advocates halting technological progress. Some even say that a number of the signatories are simply researchers who have perhaps not done so well in their own commercial endeavours and are now trying to steal the thunder of their more successful peers.

Responsibility and ethics

But AI responsibility and ethics, and the need for stricter governance of AI models, have been central to technological debates for years. Concerns about the massive pools of data swallowed up by GenAI models are entirely justified and far from just a ‘moral panic.’ They involve not only privacy and safety issues but also matters of intellectual property and copyright.

Finally, the fight against the racist and misogynistic biases of AI algorithms and software is now more urgent than ever, and certainly not a fad. Adequate legislation and governance are necessary, and they must go far beyond mere ‘tick-box’ exercises. It remains to be seen whether governments, the business community and ultimately the public at large are ready for this challenge.