Researchers at Carnegie Mellon University in the US have developed an automated process that more accurately transforms the content of one video into the style of another.

The process could be invaluable for the film industry, but there are concerns the technology could be used to rapidly create so-called deepfakes, which often see a celebrity’s face convincingly placed onto another person’s body.

Deepfakes, a combination of the words ‘deep learning’ and ‘fake’, have been proliferating on the internet for several years. The technique has often been used to create fake celebrity pornographic videos, as well as fake political videos. Deepfakes have been banned from notable platforms such as Reddit, Twitter and Imgur.

Carnegie Mellon researcher Aayush Bansal said the team developed the new process, called Recycle-GAN, to enable films to be produced more quickly and cheaply. However, he was open about the potential abuse of the technology for deepfakes.

“It was an eye-opener to all of us in the field that such fakes would be created and have such an impact,” he said. “Finding ways to detect them will be important moving forward.”

Previous examples of political deepfake videos include replacing the face of German Chancellor Angela Merkel with US President Donald Trump’s, and Argentine President Mauricio Macri’s face with that of Adolf Hitler.

Last week, Congress quizzed Facebook COO Sheryl Sandberg about the dangers of deepfakes on social media.

“Deepfakes is a new area, and as always we’re going to do a combination of investing in technology and investing in people,” she said.

How does it work?

The AI-driven technology typically relies on a class of algorithms called generative adversarial networks (GANs), which help computers apply the style of one image to another.

In a GAN, one model, known as the discriminator, learns to detect what is consistent with an image or style, while another, known as the generator, learns how to create images or videos that match the style.
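
As a rough illustration of that tug-of-war, the sketch below scores the two halves of a GAN using the standard binary cross-entropy losses. It is a minimal sketch rather than the researchers' code: the function names and the toy scores are assumptions made for illustration, and in a real system the scores would come from neural networks.

```python
import math

EPS = 1e-12  # keeps log() away from zero


def discriminator_loss(real_scores, fake_scores):
    """Low when real samples score near 1 and generated samples score near 0."""
    real_term = -sum(math.log(s + EPS) for s in real_scores) / len(real_scores)
    fake_term = -sum(math.log(1.0 - s + EPS) for s in fake_scores) / len(fake_scores)
    return real_term + fake_term


def generator_loss(fake_scores):
    """Low when the detector is fooled, i.e. generated samples score near 1."""
    return -sum(math.log(s + EPS) for s in fake_scores) / len(fake_scores)


# Hypothetical scores: the detector's estimate that each sample is real.
sharp_detector = discriminator_loss(real_scores=[0.9, 0.8], fake_scores=[0.1, 0.2])
confused_detector = discriminator_loss(real_scores=[0.5, 0.5], fake_scores=[0.5, 0.5])
print(sharp_detector < confused_detector)  # a sharper detector has the lower loss
```

Training alternates between the two: the detector is updated to lower its loss, then the creator is updated to lower its own, which pushes the generated output closer to the target style.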

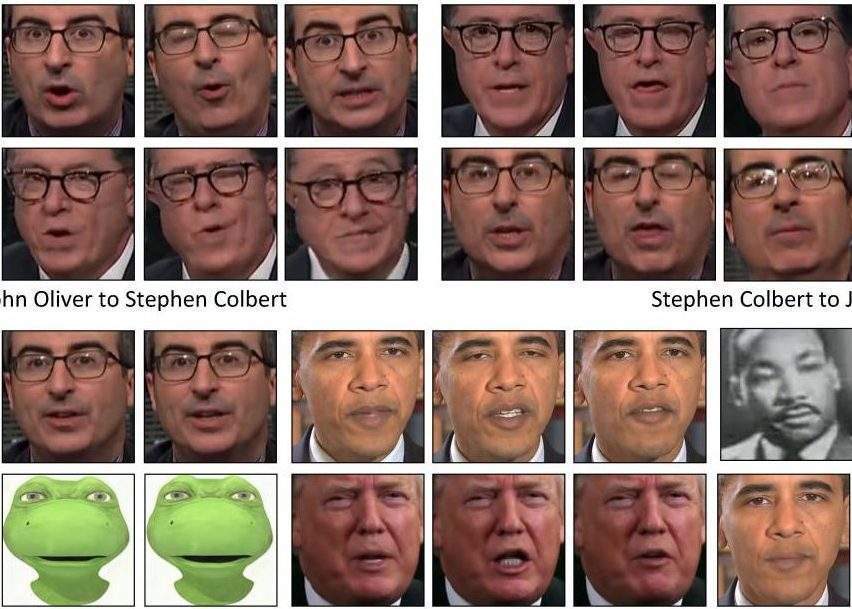

One variation of this technique, named Cycle-GAN, converts the new video back to the original source to check its quality. The researchers compared this to translating English to Spanish then back into English. However, imperfections can appear when using this technique.

Carnegie Mellon researchers built on this process to develop Recycle-GAN. Using AI, they factored in changes over time, in addition to spatial information.
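
The round-trip check can be sketched in a few lines. This is a simplified illustration under stated assumptions: a frame is represented as a flat list of pixel values, and `to_target` and `to_source` are hypothetical stand-ins for the two learned translators, not functions from any published code.

```python
def l1_distance(a, b):
    """Mean absolute difference between two equally sized frames."""
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)


def cycle_consistency_loss(frame, to_target, to_source):
    """Translate a frame to the other domain and back, then measure the drift."""
    reconstructed = to_source(to_target(frame))
    return l1_distance(frame, reconstructed)


frame = [0.2, 0.5, 0.9]  # toy "frame" of three pixel values


def double(f):
    return [2 * p for p in f]


def halve(f):
    return [p / 2 for p in f]


def halve_with_drift(f):
    # An imperfect return trip that shifts every pixel slightly.
    return [p / 2 + 0.1 for p in f]


perfect = cycle_consistency_loss(frame, double, halve)
imperfect = cycle_consistency_loss(frame, double, halve_with_drift)
print(perfect, imperfect)
```

A perfect round trip gives a loss of zero, while any drift on the way back shows up as a positive loss that training then tries to reduce.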
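
One way to picture how time enters the check is sketched below. It follows the general idea of comparing a predicted next frame with the real one after a trip through the other domain. The article does not spell out the method, so every name here (`recycle_loss`, `predict_next_target`, the toy translators and frames) is an assumption made for illustration, not the team's implementation.

```python
def recycle_loss(source_frames, to_target, predict_next_target, to_source):
    """Average squared error between each real next frame and a prediction
    that was made in the target domain and translated back."""
    if len(source_frames) < 2:
        raise ValueError("need at least two frames")
    total = 0.0
    for t in range(1, len(source_frames)):
        history = [to_target(f) for f in source_frames[:t]]  # frames seen so far
        predicted = to_source(predict_next_target(history))  # back to the source domain
        actual = source_frames[t]
        total += sum((p - q) ** 2 for p, q in zip(predicted, actual)) / len(actual)
    return total / (len(source_frames) - 1)


def double(f):
    return [2 * p for p in f]


def halve(f):
    return [p / 2 for p in f]


def repeat_last(history):
    """Ignores motion: assumes the next frame equals the latest one."""
    return list(history[-1])


def linear_predictor(history):
    """Uses motion: extrapolates from the two latest frames."""
    if len(history) < 2:
        return list(history[-1])
    last, prev = history[-1], history[-2]
    return [2 * a - b for a, b in zip(last, prev)]


# Toy clip that brightens steadily from frame to frame.
frames = [[0.0, 0.0], [0.25, 0.25], [0.5, 0.5], [0.75, 0.75]]
static_loss = recycle_loss(frames, double, repeat_last, halve)
motion_loss = recycle_loss(frames, double, linear_predictor, halve)
print(motion_loss < static_loss)
```

In this toy clip, the predictor that tracks the change between frames scores a lower loss than the one that ignores it, which is the sense in which temporal information adds a constraint that a single-frame check cannot.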

The result is a video with more accurate facial expressions, movements and cadence. In one example, the research team used Recycle-GAN to transform a video of John Oliver into fellow comedian Stephen Colbert and back into Oliver.

Beyond deepfakes

Some have raised concerns that if the technology continues to advance, it will become impossible to distinguish fake videos from real ones.

However, Bansal suggests the technology could also prove useful for training autonomous vehicles to drive at night or in adverse weather.

According to Bansal, it can be difficult to obtain footage of such conditions in which objects can be identified and labelled. Using Recycle-GAN, daylight footage containing hazards could be transformed into night scenes or adverse weather.

The technology is not limited to people, either. In another example, Carnegie Mellon researchers used Recycle-GAN to create time-lapses of synchronised blooming flowers.

But Bansal points to film production as the primary motivation for developing the method. Recycle-GAN could, for example, convert black and white video to colour automatically.

“I think there are a lot of stories to be told,” he said. “It’s a tool for the artist that gives them an initial model that they can then improve.”