Nvidia has added a new range of features to its high-end chip for artificial intelligence (AI) and said it will begin rolling it out next year with Amazon, Google and Oracle.

The forthcoming H200 chip has been upgraded with more high-bandwidth memory, meaning that it will be able to process larger swaths of data at a quicker rate.

Nvidia, which currently dominates the market for AI chips, said the H200 will hold 141 gigabytes of high-bandwidth memory, a major increase from the H100’s 80 gigabytes.

The US company’s chips power popular generative AI (GenAI) services, such as OpenAI’s ChatGPT, that answer human prompts with human-like responses. More high-bandwidth memory will mean services like ChatGPT can give more intricate responses in less time.

Nvidia said last week that Google Cloud, Amazon Web Services, Microsoft Azure and Oracle Cloud Infrastructure will be among the key cloud service providers to gain access to H200 chips.

The US chip maker reported revenues of $26.97bn for the fiscal year ended January 2023, an increase of 0.2% over the previous fiscal year, according to GlobalData’s company profile database.

The upgrade comes as enterprises remain eager to incorporate GenAI into their business processes.

As GlobalData states in its Generative AI Watch report, businesses are looking to incorporate the technology into applications ranging from “customer service, sales support, resume search, request for proposal preparation, fraud identification, code generation, information retrieval, and much, much more”.

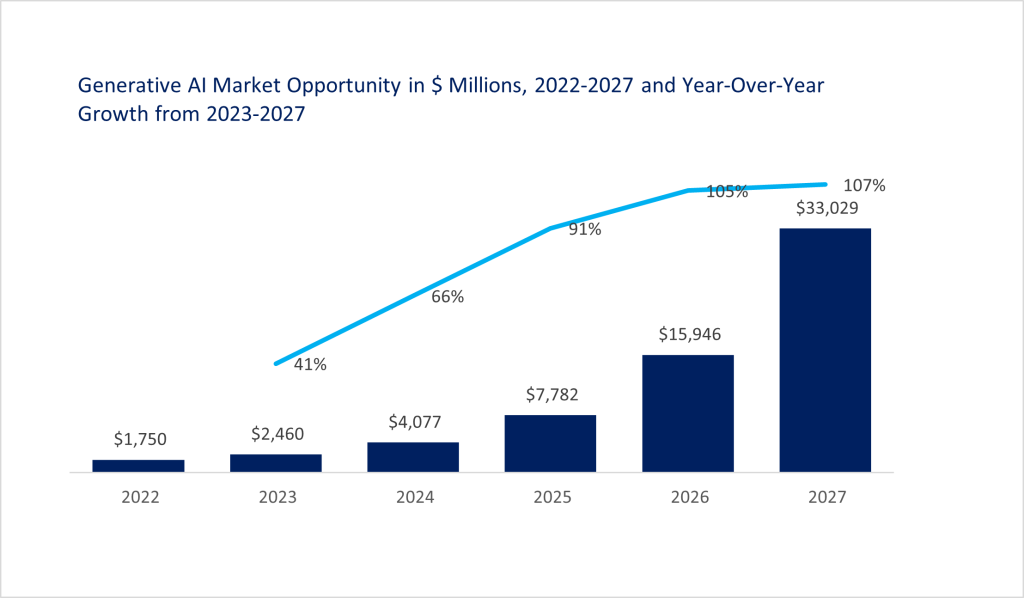

GlobalData says the revenue opportunity for GenAI is enormous, with the market expected to grow from $1.8bn in 2022 to $33bn in 2027, a compound annual growth rate of 80%.
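
For readers who want to check the arithmetic behind the growth forecast, the short Python sketch below recomputes the compound annual growth rate from the two market sizes quoted above. It assumes five compounding years (2022 to 2027) and the standard CAGR formula; GlobalData's own method is not described in the report excerpt, and the function name `cagr` is purely illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.8 means 80%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes quoted in the article, in billions of US dollars.
# Assumption: growth compounds over the five years from 2022 to 2027.
rate = cagr(1.8, 33.0, 2027 - 2022)
print(f"Implied CAGR: {rate:.1%}")
```

Under those assumptions the result comes out just below 80%, which is consistent with the rounded figure GlobalData cites.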