Deepfakes are on the rise. The number of manipulated videos on the internet jumped by 84% to nearly 15,000 between 2018 and 2019, according to deepfake detection company Deeptrace.

The technology could be the new frontier of fake news. It is capable of weaponizing content, exacerbating conflicts, and undermining democracies.

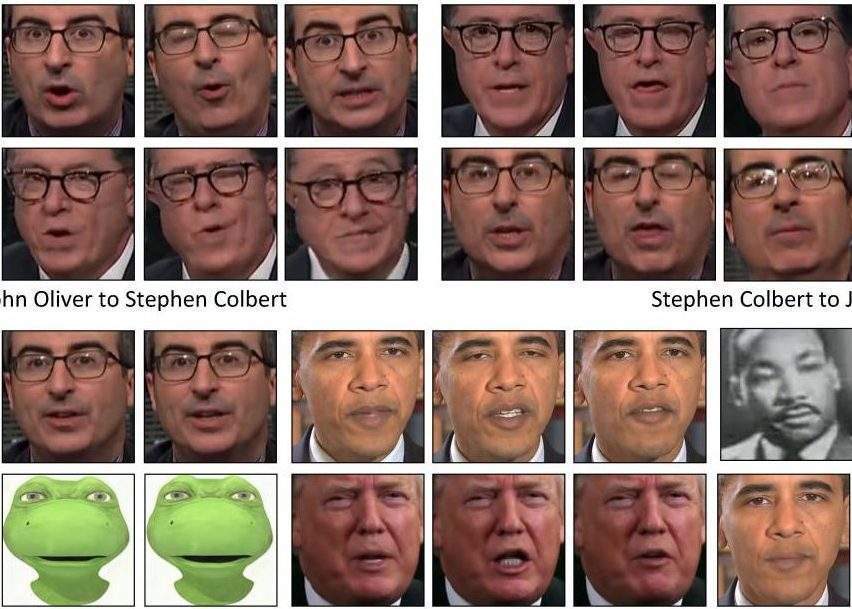

Deepfakes are videos that show a person saying or doing something that they never said or did. They are made with AI-based software that transposes a digital facial image onto an existing video of a person. While the technology is not entirely new, it has recently become more sophisticated and accessible. And, like fake news articles on social media, deepfakes are easy to share: they can potentially reach millions of viewers in seconds.

Deepfakes almost certain to be used in the next US presidential campaign

In the run-up to the 2020 US presidential election, deepfakes are almost certain to be used to spread misinformation. Candidates can expect to be targeted by manipulated videos in which they appear to say things that could harm their chances of being elected.

A corollary of the normalization of deepfakes is that public trust in all videos, including genuine ones, is eroded. This leads to increased cynicism and apathy towards political campaigns and politics in general.

In line with their strategies to tackle online misinformation, big tech companies are stepping up their efforts to curb deepfakes. Facebook and Microsoft are collaborating with universities to create a large database of fake videos for studying detection methods. Google has similarly built up a catalogue to improve deepfake detection. Twitter recently acquired the start-up Fabula, which uses deep learning to detect online misinformation.

Companies have a responsibility to remove deepfakes

These companies also have a responsibility to remove deepfakes from their platforms. Facebook has recently announced that it will use AI to identify and take down any intentionally misleading deepfake. However, the new policy will not apply to videos intended to be parody or satire, or any videos edited solely to omit or change the order of words.

Under this interpretation, the ban wouldn’t cover a viral video showing the speaker of the US House of Representatives, Nancy Pelosi, appearing to slur her words, as it was edited without using AI.

More controversially, the company’s decision to not ban false claims in advertising, including political ads, means that technically it could allow political deepfakes to be shared during the presidential election campaign.

Legal challenges are on the way

Lawmakers across the world are also moving to tackle deepfakes from a legal standpoint. US states including California and Texas have enacted laws that make deepfakes illegal when they are used to interfere with elections.

At the federal level, lawmakers in Congress have introduced a bill aimed at regulating harmful uses of AI and requiring big companies to audit their machine learning systems for bias and discrimination.

In Europe, the UK released the Online Harms White Paper, which proposes a duty of care for tech companies on audio and video misinformation. Finally, China has made it a criminal offense to publish deepfakes without disclosure.

Ethics need to be discussed

Both technological and legal approaches have shortcomings. For example, a video can go viral before experts and detection systems can determine whether it is a fake. Also, while AI regulation is advancing, it will be extremely hard to develop a one-size-fits-all solution across different markets. As with another controversial AI technology, facial recognition, deepfakes are becoming part of our daily life without adequate consensus on how they should be used. Legal standards and regulations are critical, but what is lacking is a broader discussion about the values of good technology and the ethics that should inform its use.