Spending on the Internet of Things is expected to reach $745bn in 2019. With businesses becoming increasingly aware of how IoT can improve operations, and more and more consumers turning to Alexa to turn on their lights, the industry is expected to keep achieving double-digit growth each year through to 2022, according to market intelligence firm IDC.

But what exactly is the Internet of Things and how did it go from futuristic idea to an industry approaching $1tn? Verdict looks back at the history of IoT:

What is the Internet of Things?

To understand where the history of IoT starts, and why the creation of certain technologies was vital to its development, it is first important to understand exactly what IoT means.

The Internet of Things refers to the collection of internet-connected sensors and devices used to monitor and control the environment, the products within it and the people that interact with it.

This includes virtual assistant devices such as Amazon Alexa or Google Home, as well as the numerous smart devices, such as plugs, lights, heating systems and security systems, that connect to them. It also includes larger appliances such as fridges, as well as a growing number of vehicles.

These are the items most commonly associated with the IoT, but technically the IoT consists of any device that connects to the internet, including smartphones and tablets.

The IoT industry, an ecosystem of technologies and companies ranging from microchip manufacturers to platform providers, is split into six broad segments. These are connected cars, automated homes, the industrial internet, wearable technology, smart metering and connected stores.

A brief history of IoT: Where did it all start?

It’s difficult to pinpoint exactly when and where the history of IoT starts.

The idea of machines communicating with each other was first floated in the early 1800s and was technically first achieved in the 1830s with the creation of the electrical telegraph, which allowed messages to be sent as electrical signals over great distances.

However, to achieve online communication between devices, the internet first needed to be created. Spawned out of a project started by the Defense Advanced Research Projects Agency (DARPA) in 1962, it slowly evolved into the modern internet, a vast network that allows different smaller networks to communicate.

Fast forward to the 1980s, and the very first known IoT device was created.

Four Carnegie Mellon University graduate students, tired of trips to the nearest Coca-Cola vending machine only to find it empty, decided to modify the machine to track its contents. A computer board was fitted inside the machine and connected to the lights that came on to indicate when a certain product was out of stock. The board was connected to the main computer of the university’s computer science department, which was in turn connected to ARPANET, an early form of the internet. This meant that ARPANET-connected computers were able to monitor the machine, allowing users to better plan their trips to it.

Why the Internet of Things?

The IoT name, however, wasn’t coined until 1999. The name came about through Kevin Ashton, then working on a project to optimise the supply chain at consumer goods manufacturer Procter & Gamble, in an attempt to excite senior executives about RFID (radio-frequency identification) technology.

Ashton proposed putting RFID smart labels, small embedded chips, on P&G products, which would allow the identification and tracking of specific objects throughout the supply chain. Knowing that the internet, then a buzzword itself, would excite them, he worked it into the title of his presentation to the board.

“They had no idea what I was going to tell them, but they knew the internet was a big deal,” Ashton explained to TechRepublic. “So if I could get the word internet into the title of my presentation, I could get their attention.”

Smart devices: Making IoT mainstream

Originally billed as a way for businesses to better manage their supply chains, IoT really started to excite businesses in the early 2010s, much like the anticipation surrounding artificial intelligence today. The history of IoT, at least as we now know it, starts around here.

It was the emergence of companies such as Nest Labs, the creator of the Nest smart thermostat, and the continued investment in personal assistant technology by US tech giants such as Amazon and Google, that really brought IoT to the public’s attention in the last couple of years.

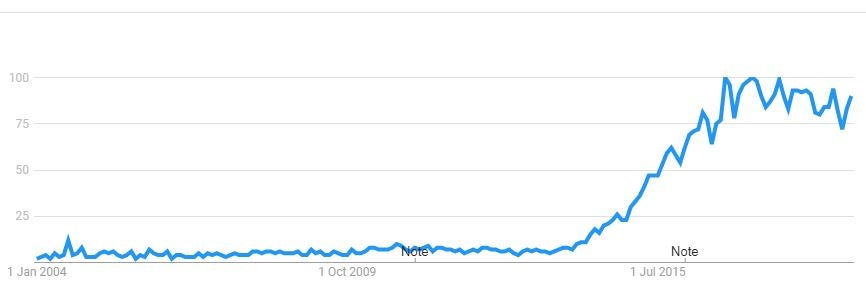

Google Trends shows that interest in IoT really exploded in 2014, before reaching its peak in late 2016. With voice assistants becoming cheaper and the range of smart devices available increasing, interest in IoT has remained high since.

The future of IoT: Connected everything

If the annual Consumer Electronics Show teaches us anything each year, it is that the world is becoming increasingly connected. From smart toilets to smart mirrors to smart beds, more and more products and furnishings are being taken online.

According to GlobalData, this is only set to continue as components get cheaper and performance gets better.

“The component and semiconductor segments will continue to deliver innovation, leading to improved capabilities on one hand, and lower cost on the other,” the business intelligence company predicts.

“These price and performance improvements will open up the economic case for new applications with sensors getting smaller, cheaper, and more pervasive.”