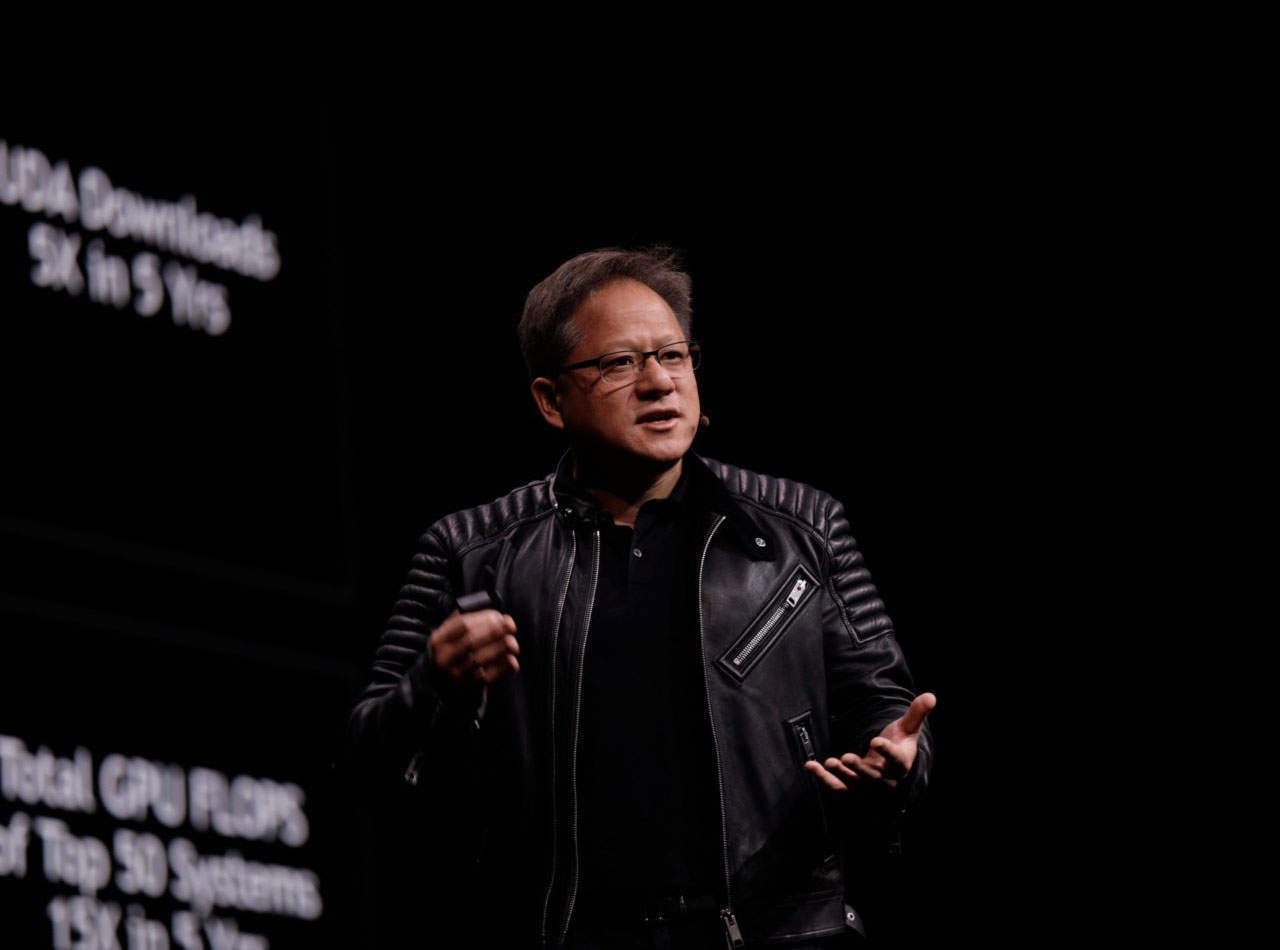

Moore’s Law is dead and we’re in the “era of the automation of automation”, says NVIDIA CEO and founder Jensen Huang.

Speaking to press at NVIDIA’s GPU Technology Conference in Munich, he said that both of these dynamics “control the computer industry today”.

Moore’s Law – the projection that the number of transistors on an integrated circuit doubles roughly every two years, steadily increasing the power of computers – proved accurate for several decades. But in recent years, CPU performance growth has slowed.

But that doesn’t mean that supercomputing will slow with it. Instead, Huang believes that the future of accelerated computing – essential for advanced AI programs – continues to lie with the graphics processing unit (GPU).

“The goal of accelerated computing is not just to make the chip go faster,” he says. “The goal of accelerated computing is to make the application go faster.”

That means improving the entire stack: the algorithm, software and processor.

He offers the analogy of delivering a package by aeroplane in less time: instead of focusing on making the plane go faster, you take a different approach entirely, such as 3D printing the package at the destination.

The future of AI is in GPU-accelerated computing

This GPU-boosted accelerated computing approach is already driving AI solutions across a myriad of markets, such as retail, telecommunications and autonomous vehicles.

In the case of a driverless car, the stack encompasses the chip, the system software, the architecture and the algorithms.

And that computing stack could be in mass production in the next two to three years, with NVIDIA announcing that Volvo has selected its DRIVE suite to power its level 2 autonomous cars.

Huang also believes that trials for robot taxis will probably start taking off in certain cities next year, with autonomous vehicles becoming “quite a large business” in the next 5-10 years.

Accelerated computing is enabling more advanced forms of AI, with deep learning benefiting in particular compared with traditional machine learning.

Traditional machine learning, used by companies all over the world, requires a domain expert to pass knowledge on to an algorithm. This could mean training an AI to recognise features – eyes, nose, face etc. – in order to identify an image as a human face.

By contrast, deep learning teaches itself to identify these features. Computers are writing software that humans simply couldn’t write, trying “every possible optimisation” on a supercomputer.

“It’s exciting because this is the era of the automation of automation,” he says. “Where software brought to us automation, this is now the automation of that.”

However, Huang insists that “the most powerful technologies should be open”. This mentality is reflected in RAPIDS, an open-source library of AI software, announced this week at GTC Europe.

The GPU-accelerated platform drastically improves the speed of data processing and has been trialled by Walmart for keeping track of its inventory. By cutting the time it takes data scientists to forecast the stock it needs, Walmart can reduce wastage among its perishable goods.