The United Kingdom’s Digital, Culture, Media and Sport Committee has published its final report into disinformation and fake news, with Facebook at the centre of its fight to end “an era of self-regulation”.

The report focuses on Facebook’s numerous recent scandals, including the Cambridge Analytica scandal, in which data collected on millions of users through a third-party app were used to profile and target voters ahead of political votes, and alleged Russian meddling in the 2016 United States presidential election.

Mark Zuckerberg’s refusal to appear in front of the committee didn’t help, with the Facebook CEO accused of showing “contempt towards both the UK Parliament and the ‘International Grand Committee’”.

But with Facebook having made efforts to tackle fake news and the spread of disinformation, is the social media giant being unfairly singled out by regulators?

Facebook and the DCMS fake news report

“Although the report is aimed at social media in general, it is Facebook, and to a lesser extent, Google, that have been singled out,” said Richard Holway, Chairman of technology research and analysis company TechMarketView.

Facebook is mentioned on 501 occasions in the 109-page report. Twitter is mentioned 24 times. Google is mentioned five times and its YouTube video-sharing platform is mentioned three times.

Mark Zuckerberg is the only CEO of a tech giant to be named in the DCMS report.

That is despite recent studies finding that Facebook is doing a better job of dealing with the issue of fake news than other social media platforms.

In September, a team of researchers from Stanford University and New York University found that Facebook is significantly outperforming Twitter in the fight against fake news. They analysed the social media performance of stories from fake news sources between January 2015 and July 2018 and found that interactions with fake news rose steadily until 2017. After that, however, Facebook interactions fell sharply, while interactions on Twitter continued to climb.

“The ratio of Facebook engagements to Twitter shares was roughly steady at around 40:1 from the beginning of our period to late 2016, then fell to roughly 15:1 by the end of our sample period.”

Facebook fake news: Is the social media platform cleaning up its act?

The committee concluded that Zuckerberg had failed to show “leadership or personal responsibility” over fake news. However, the findings of the Stanford University-New York University study suggest otherwise.

The researchers concluded that the “efforts by Facebook following the 2016 election to limit the diffusion of misinformation may have had a meaningful impact”.

Following the Cambridge Analytica scandal, Zuckerberg announced that the social media platform would be changing its algorithm to show less content published by brands and more content published by friends and family.

“We feel a responsibility to make sure our services aren’t just fun to use, but also good for people’s well-being,” Zuckerberg said. “If we do the right thing, I believe that will be good for our community and our business over the long term too.”

While the decision wasn’t directly a result of the spread of fake news, showing users less news in general should also mean showing users less fake news.

Aside from that, Facebook has also worked with UK-based charity Full Fact to review articles, images and videos posted on the platform and scrutinise their accuracy, a move the charity called a “step in the right direction” for the social media company.

How does negative publicity impact the Facebook share price?

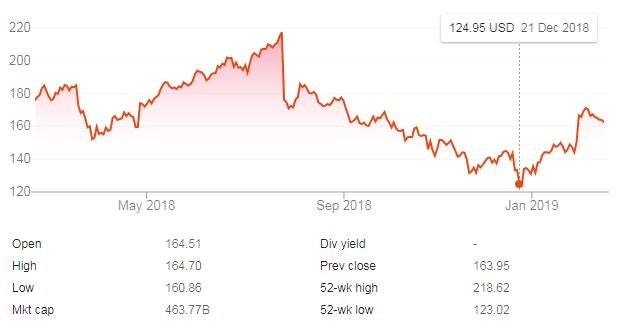

Currently, the Facebook share price sits at around the $160 mark, down from approximately $175 a year ago. Having recovered from a December low of approximately $125, Facebook is faring well of late, but the numerous dips surrounding negative events will be a worry for the tech giant.

While there has been no impact yet, the prospect of stricter regulations and the costs that come with responding to these changes could see markets respond.

“The DCMS recommends a compulsory and legally backed code of ethics to remove fake news, disinformation and harmful content,” Holway said. “An independent regulator would be established. Fines could be levied and, in certain cases, criminal charges.

“I think the days where social media companies say that the stuff they carry ‘has nothing to do with me guv’ whilst reaping huge financial reward are coming to an end. Implementing any new regulations will cost social media companies big time.”

Tackling fake news: A mandate for all businesses

Whether or not the report unfairly singles out Facebook, responding to fake news is becoming an increasingly important issue for internet companies.

The report warns that “social media companies cannot hide behind the claim of being merely a ‘platform’ and maintain that they have no responsibility themselves in regulating the content of their sites”.

Countries such as Germany and France have already passed legislation that forces technology companies to respond to harmful content and disinformation. Breaching Germany’s Network Enforcement Act carries a potential fine of €20m if a social media platform fails to remove hate speech within 24 hours. Likewise, failing to immediately remove online articles that spread disinformation carries a prison sentence of up to one year in France.

Bhupender Singh, CEO of technology services company Teleperformance Digital Integrated Business Services, said:

“A brand’s reputation is on the line every day, with users from all over the world contributing and sharing content at the click of a button, anytime, anywhere. With the rise in social sharing and the government proposed plans, judging the appropriateness of content has become a mandate for all businesses, 24/7.”