The proposed EU Artificial Intelligence (AI) legislative framework is a landmark attempt to regulate AI. The proposal contains various measures, including the regulation of model input data, on the basis of which a system produces an output.

Regulating input data could improve AI explainability and reduce algorithmic bias. Unbalanced data is one of the main reasons for misrepresentations in AI models. Regulating the dataset features fed into a model helps explain how AI models arrive at an output.

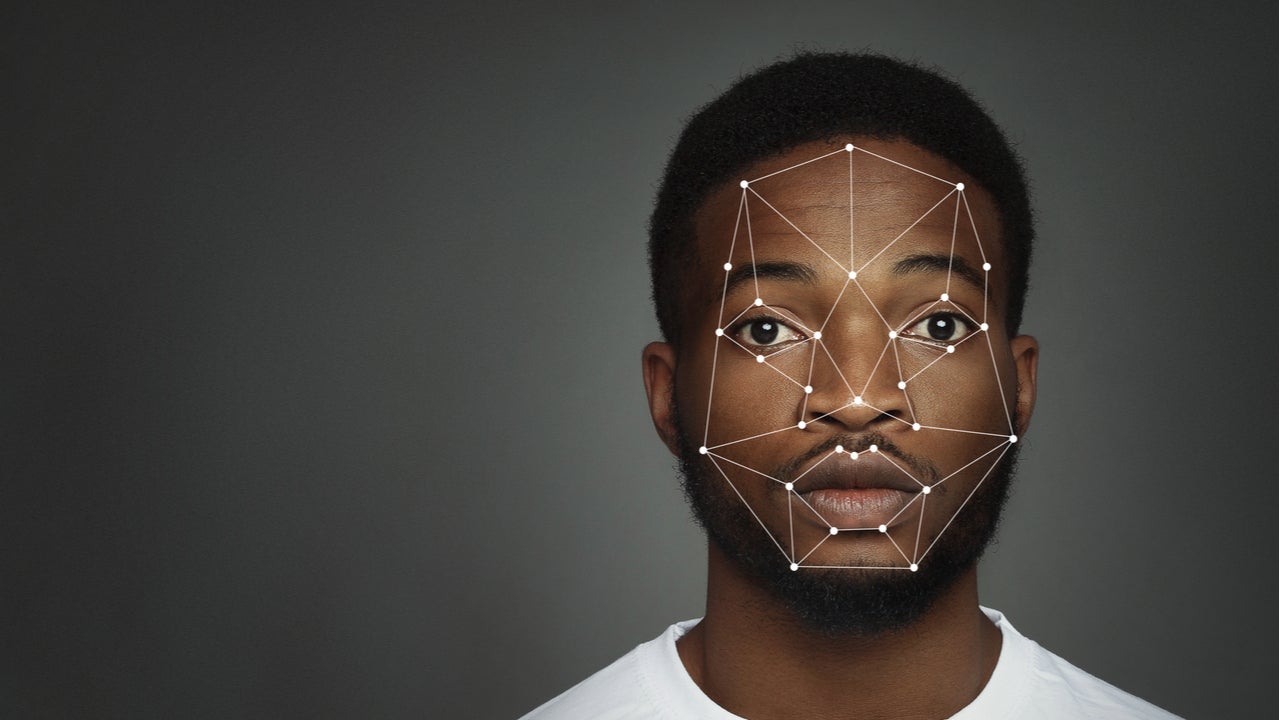

This could address some of the biggest ethical issues in tech, such as bias in facial recognition systems, and political polarization or targeted misinformation on social media platforms like Facebook.

In the past, companies have managed to stave off official regulation, arguing that regulating AI models and platforms would stunt innovation. This meant AI was governed by a disparate series of AI ethics codes and guidelines, rather than enforceable regulation.

GlobalData forecasts the market for AI platforms will reach $52bn in 2024, up from $29bn in 2019. The input-based rules offer the best route to explainable AI and to reducing algorithmic bias.

Input-based rules for ethical AI

Input data is the data directly acquired by the AI system, on the basis of which the system produces an output, as defined by the EU draft AI legislation. This is an important factor in algorithmic bias, as datasets need to be representative and balanced to produce a balanced output. Algorithms can also create biased outputs, but unbalanced training data is often the source of representation problems in AI models.
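To make the idea of a "representative and balanced" dataset concrete, the sketch below compares each group's share of a dataset with a reference share and flags groups that fall short. It is only an illustration: the function names, the 5-percentage-point tolerance, and the sample figures are all hypothetical and are not taken from the draft legislation, which does not prescribe a specific test.

```python
from collections import Counter


def representation_gaps(labels, reference_shares):
    """Return observed share minus reference share for each reference group."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = len(labels)
    return {
        group: counts.get(group, 0) / total - expected
        for group, expected in reference_shares.items()
    }


def underrepresented(labels, reference_shares, tolerance=0.05):
    """List groups whose observed share is more than `tolerance` below the reference."""
    gaps = representation_gaps(labels, reference_shares)
    return sorted(group for group, gap in gaps.items() if gap < -tolerance)


# Hypothetical dataset: group "a" dominates the sample.
sample = ["a"] * 80 + ["b"] * 18 + ["c"] * 2
# Hypothetical reference shares, e.g. from the population the system will serve.
reference = {"a": 0.6, "b": 0.3, "c": 0.1}

flagged = underrepresented(sample, reference)
print(flagged)  # groups "b" and "c" fall short of their reference shares
```

A check like this only looks at group counts. It says nothing about label quality or about bias introduced by the algorithm itself, which the article notes is a separate source of biased outputs.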

Crucially, machine learning models can change and develop, and regulating input data can control this. Article 15, Accuracy, robustness and cybersecurity, suggests ‘feedback loops’ of products already on the market should be continually addressed. This refers to possibly biased outputs, which are then used as inputs for future operations.
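The feedback-loop concern can be illustrated with a toy simulation in which a model's output is reused as its next input. The model here is entirely hypothetical: it is assumed to down-weight one group by a fixed `skew` each round, which is a simplification chosen for illustration and not a mechanism described in Article 15.

```python
def simulate_feedback_loop(initial_share, skew, rounds):
    """Track one group's share of the data when outputs are reused as inputs.

    Assumption (hypothetical model): each round the system down-weights the
    group by `skew` relative to everyone else, and its output becomes the
    input data for the next round.
    """
    if not 0.0 < initial_share < 1.0:
        raise ValueError("initial_share must be between 0 and 1")
    if not 0.0 <= skew < 1.0:
        raise ValueError("skew must be in [0, 1)")

    share = initial_share
    shares = [share]
    for _ in range(rounds):
        weighted = share * (1.0 - skew)       # group's weight after the skew
        rest = 1.0 - share                    # everyone else, unchanged
        share = weighted / (weighted + rest)  # renormalise so shares sum to 1
        shares.append(share)
    return shares


# A group starting at 30% of the data, with a 10% skew applied each round.
history = simulate_feedback_loop(initial_share=0.3, skew=0.1, rounds=10)
for round_number, share in enumerate(history):
    print(f"round {round_number}: {share:.3f}")
```

Under these assumptions, even a small per-round skew compounds, so the group's share shrinks every round. This is why continually reviewing the inputs of systems already on the market matters.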

The Commission has kept the draft regulations streamlined, focusing on ‘high-risk AI’ use cases that pose significant risks to the health, safety, and fundamental rights of persons, but the rules could potentially be extended to non-high-risk models.

Big Tech not protected by innovation measures

Big Tech is against AI legislation, arguing it would stifle the ability to innovate and produce cutting-edge AI models and products. This has proved an effective argument in the past, with stakeholders wanting to avoid another AI winter.

The proposed AI legislation somewhat accounts for this: small and medium-sized enterprises (SMEs) and start-ups can use AI regulatory sandboxes, controlled environments for testing new AI models for a limited time. However, Big Tech will not receive the same treatment.

Margrethe Vestager, Executive Vice-President for a Europe fit for the Digital Age, said the draft AI legislation is “future-proof and innovation-friendly,” and “our rules will intervene where strictly needed: when the safety and fundamental rights of EU citizens are at stake”.

Regulation is solidifying previous guidelines

Individual companies’ AI ethics codes and guidelines have had limited success in ensuring ethical AI standards are applied across companies globally. The EU AI legislation would operate like the existing General Data Protection Regulation (GDPR). Any company whose AI model output affects European citizens’ data will be forced to comply.

Companies breaching this regulation could be fined up to 6% of global turnover. This means any company operating within the EU, or utilizing EU citizens’ data, would be forced to comply, creating the foundations for a global standard for ethical AI.

The EU draft AI legislation does bear some resemblance to previous guidelines, like the UK House of Lords AI Select Committee’s report, AI in the UK: ready, willing and able? This recommended a mixture of technical transparency and explainability to regulate AI. Significantly, the report notes that transparency of AI algorithms does not include the input data, meaning a system may behave differently with different datasets.

Unsurprisingly, Big Tech will be the most affected by these rules, with no flexibility for new models and innovations, unlike start-ups and SMEs. Input data regulation is one of the best ways to avoid algorithmic bias: checking that a dataset is representative makes a representative output more likely.