Auction house Christie’s made headlines last year with the record-breaking $450m sale of Leonardo da Vinci’s Salvator Mundi. Tomorrow’s sale of Portrait of Edmond Belamy, a work produced by a generative adversarial network, will likely fetch far less, but it could signal a changing of the guard in the art world as the Old Masters give way to a technology-dominated future.

The sale of Portrait of Edmond Belamy will be another huge milestone for Christie’s. This will be the first time in history that a piece of artwork generated by artificial intelligence (AI) will go up for auction.

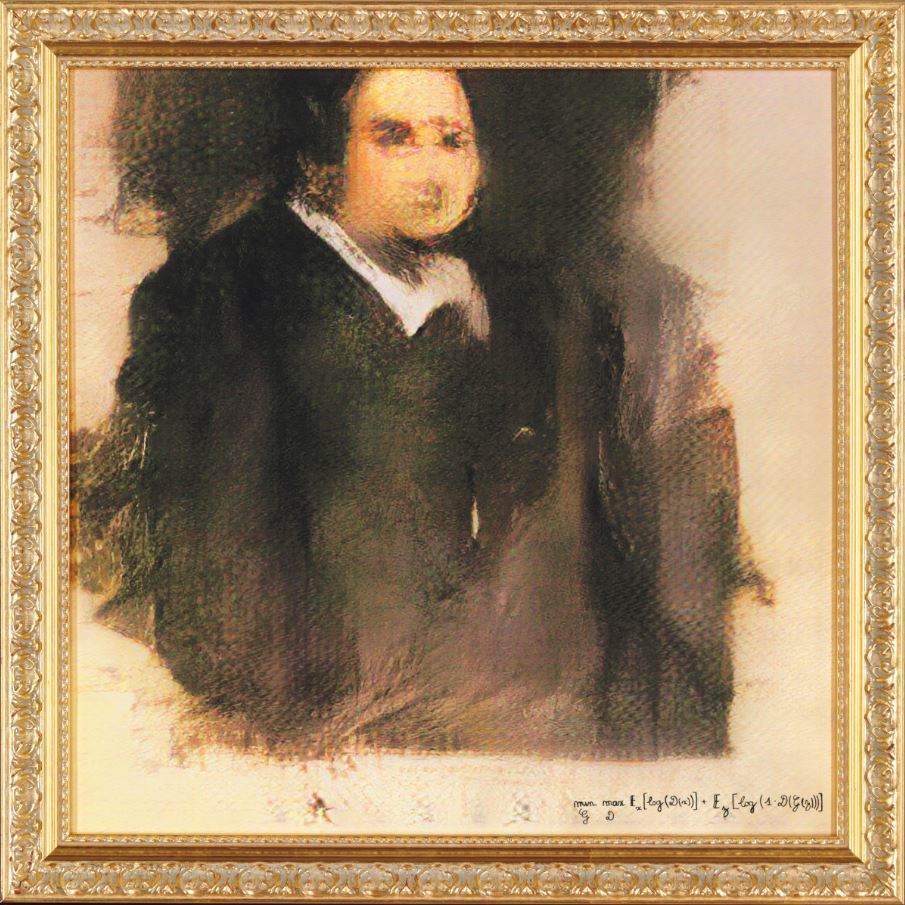

The picture depicts a blurred man in a white shirt and dark jacket, positioned off-centre. The piece is signed in the bottom right corner with “min G max D Ex[log(D(x))] + Ez[log(1-D(G(z)))]”.

The piece, created by French art collective Obvious, is one of many portraits depicting a fictional family known as the Belamys.

Portrait of Edmond Belamy is being sold as part of Christie’s Prints & Multiples auction, which is taking place in New York from 23 to 25 October. The piece will go under the hammer on Thursday evening, with the auction house expecting it to fetch between $7,000 and $10,000.

“It may not have been painted by a man in a powdered wig, but it is exactly the kind of artwork we have been selling for 250 years,” said Richard Lloyd, Christie’s sale organiser.

How was the AI painting, Portrait of Edmond Belamy, created?

Artists Hugo Caselles-Dupré, Pierre Fautrel and Gauthier Vernier created the painting by feeding a set of 15,000 historic portraits, painted between the years 1300 and 2000, into a generative adversarial network, a type of AI algorithm that uses machine learning to generate and evaluate images.

One side of the algorithm, known as the Generator, creates an image. The second side, the Discriminator, attempts to distinguish the AI-generated image from those created by humans.

“The Generator makes a new image based on the set, then the Discriminator tries to spot the difference between a human-made image and one created by the Generator,” Caselles-Dupré told Christie’s.

“The aim is to fool the Discriminator into thinking that the new images are real-life portraits. Then we have a result.”
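The back-and-forth Caselles-Dupré describes can be illustrated with a toy calculation. The sketch below is not Obvious’s code: it swaps portraits for single numbers, and every name in it (`value`, `discriminator`, `poor_generator`, `good_generator`, `fooled_discriminator`) is a hypothetical stand-in. It scores each pairing with the expression that appears as the painting’s signature, which the Discriminator tries to push up and the Generator tries to push down.

```python
import math

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

def value(discriminator, generator, real_samples, noise_samples):
    """Average of log D(x) over real samples plus log(1 - D(G(z))) over noise."""
    real_term = sum(math.log(discriminator(x)) for x in real_samples) / len(real_samples)
    fake_term = sum(
        math.log(1.0 - discriminator(generator(z))) for z in noise_samples
    ) / len(noise_samples)
    return real_term + fake_term

# Assumption: "real portraits" are just numbers clustered around 4.
real_samples = [3.5, 4.0, 4.5]
# Random-looking inputs that the Generator turns into "images".
noise_samples = [-0.5, 0.0, 0.5]

def discriminator(x):
    # Hypothetical fixed judge: outputs a probability that x is real,
    # and treats anything well above 2 as real.
    return sigmoid(3.0 * (x - 2.0))

def poor_generator(z):
    # Outputs land near 0, nowhere near the real samples.
    return z

def good_generator(z):
    # Outputs land near 4, exactly where the real samples sit.
    return z + 4.0

def fooled_discriminator(x):
    # A judge that cannot tell the difference and always answers 50/50.
    return 0.5

v_poor = value(discriminator, poor_generator, real_samples, noise_samples)
v_good = value(discriminator, good_generator, real_samples, noise_samples)
v_fooled = value(fooled_discriminator, good_generator, real_samples, noise_samples)

print("judge vs poor generator:", round(v_poor, 3))
print("judge vs good generator:", round(v_good, 3))
print("undecided judge vs good generator:", round(v_fooled, 3))
```

Against the poor generator the judge scores close to zero, the best it can do. Against the good generator the same judge is confidently wrong and its score drops sharply, which is the sense in which the Generator “fools” it. In a real network both sides are updated in turn rather than held fixed as they are here.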

Of course, the model is far from perfect, and the Discriminator is seemingly easier to fool than a human. This is what caused the distorted look of the portrait, Caselles-Dupré said.

“It is an attribute of the model that there is distortion. The Discriminator is looking for the features of the image – a face, shoulders – and for now it is more easily fooled than a human eye.”