The Foundation for Responsible Robotics (FRR) has announced it will be launching a quality mark for robotics and artificial intelligence to promote transparency and trust in AI products.

The not-for-profit think tank will assess whether AI and robotics products meet a set of standards before awarding the quality mark. Criteria will include environmental protection, sustainability, worker treatment, safety and security.

The FRR, in partnership with professional services network Deloitte, will give each product a rating out of three. The quality mark will take the form of a small sticker on robots and will also appear on the marketing websites for certified robots or algorithms.

“Our goal is to shape a culture of responsible development of AI and robotics to promote public good and a better life for us and generations to come,” said Aimee van Wynsberghe, co-director at the FRR.

Deloitte will contribute its expertise in auditing, risk analysis and legal advice, as well as in technological innovation.

“By contributing our knowledge and experience in auditing, innovation and AI we can help to create trust in the chain from producer to consumer,” said Deloitte partner and innovation leader Marc Verdonk.

Growing call for responsible robotics

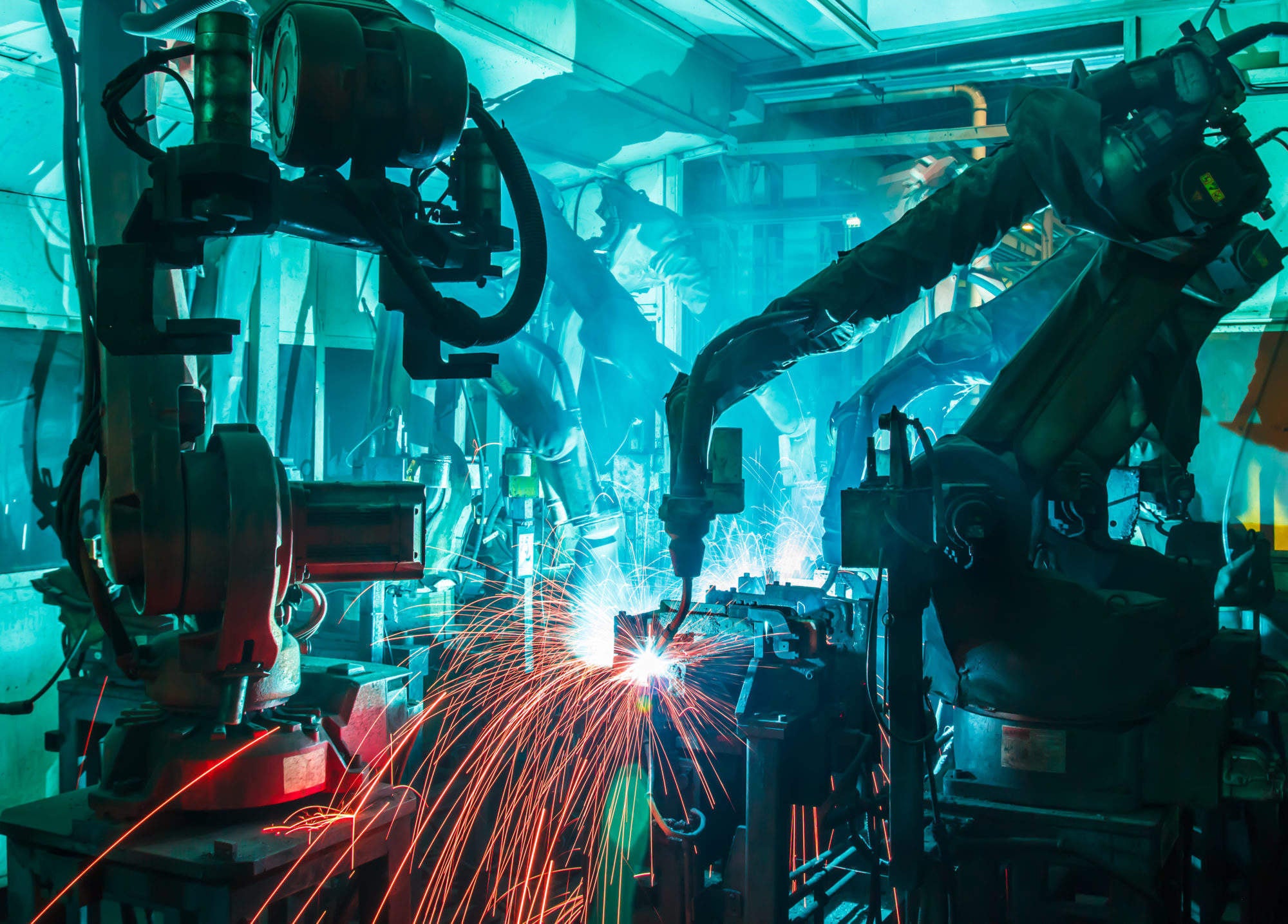

Robots are increasingly being used throughout society, whether it’s a virtual assistant in your car or collaborative robots that work alongside humans in industry.

Recently, the European Parliament passed a resolution supporting a ban on weapons that can kill autonomously, reflecting a growing trend towards preemptive regulation of robots.

It is hoped that the quality mark for responsible robotics will give consumers peace of mind that the product has been created ethically.

For producers of AI-related products, the FRR intends to work with companies to help them create products that meet the criteria.

Professor Noel Sharkey, co-director of the Foundation for Responsible Robotics, told Verdict:

“A big concern is how much a company has considered the societal impact of its product. This is strong FRR territory.

“Is the company designing the product with the societal and ethical issues in mind or are they leaving that until they are challenged later? Will the product impact adversely on human rights such as privacy, well-being and autonomy?”

The FRR is looking for a “small and diverse group” of companies to pilot the certification. These companies will work in the fields of robotics, algorithmic decision-making and machine learning.

Sharkey told Verdict that he hopes the quality mark will be operational within the next year.

“Society could reap enormous benefits from AI and Robotics, but only if we get it right,” he said.

“We need to counter the scare stories, and the hype, or risk a public backlash. We must offer the public a mark of quality that helps them to make informed decisions.”

Read more: No killer robot ban a “danger to humanity” warns Noel Sharkey